If you're extracting Armenian text from images with an LLM, use `gemini-3-flash-preview` with `temperature: 0`. Every other model I tested (`claude-haiku-4-5`, `claude-sonnet-4-6`, `gpt-5-mini`, `gpt-5.4-mini`) has a categorical weakness that makes it unusable for stylized fonts or specific glyph pairs. And it turns out that `gemini-3-flash-preview` at its default temperature (1.0) silently garbles Armenian ~22% of the time on exactly the same image it handles perfectly at temperature 0.

The setup

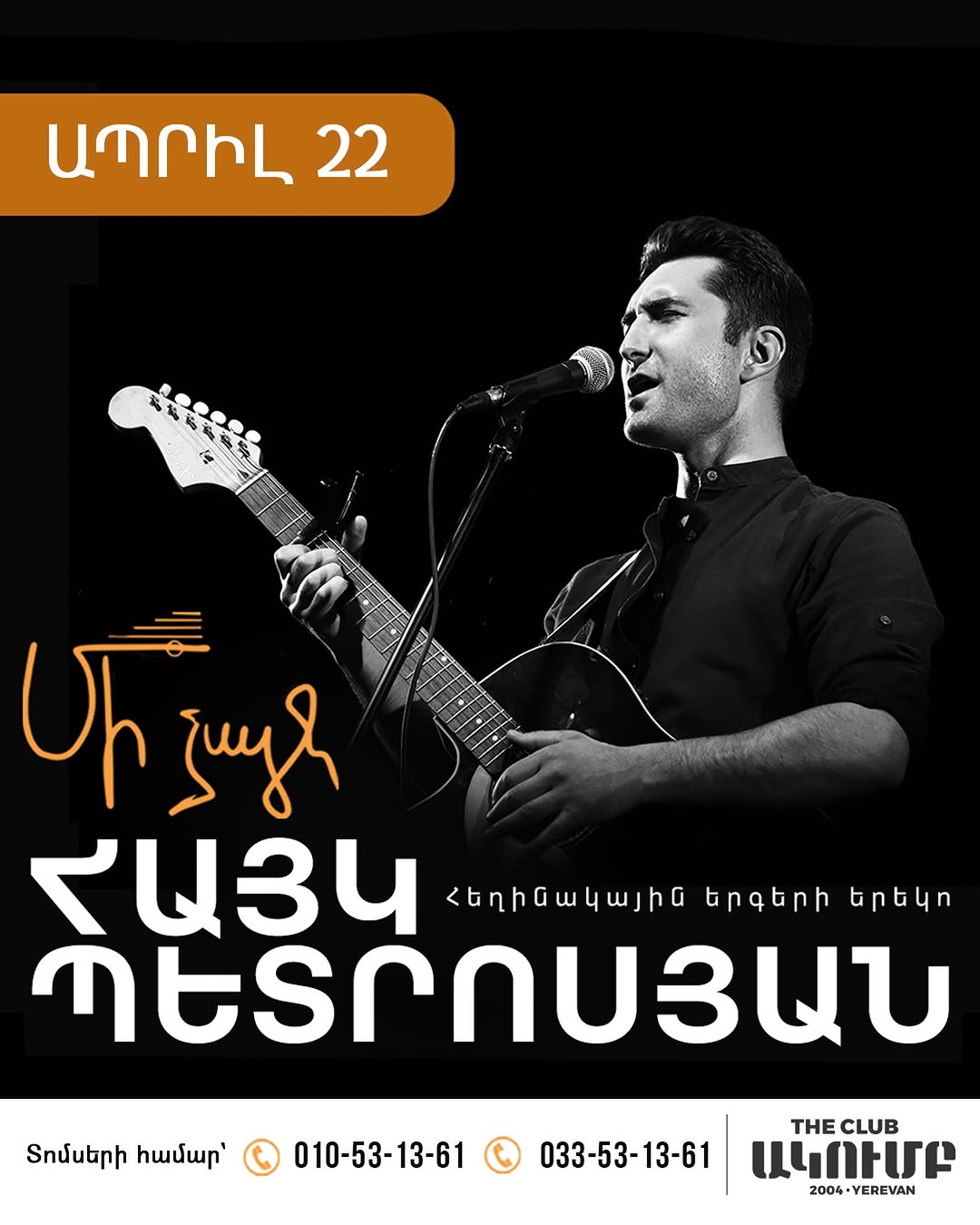

Our event pipeline ingests Instagram posts from Armenian event organizers. One post (a concert poster for "Hayk Petrosyan") got stored with a garbled media description: ՀԱՅԱ ՁԵՏՐՈՄՅԱՆ instead of ՀԱՅԿ ՊԵՏՐՈՍՅԱՆ. I wanted to know whether it was a one-off hallucination or a systematic problem. Answer: both. To measure it, I scored each output with case-insensitive, whitespace-normalized token matching against 6 tokens on the poster: date, stylized title, performer name, subtitle, tickets-for label, and venue name.
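Here is a minimal sketch of that scoring. It assumes "token matching" means substring containment after normalization, which the post doesn't spell out. The expected tokens are copied from the column headers of the results table. `normalize`, `score_run` and `summarize` are hypothetical helper names, not the pipeline's actual code.

```python
import unicodedata

# Expected tokens, copied from the column headers of the "Full results" table.
EXPECTED = {
    "date": "Ապրիլ",
    "title": "Մի ձայն",
    "name": "ՀԱՅԿ ՊԵՏՐՈՍՅԱՆ",
    "subtitle": "հեղինակային երգերի երեկո",
    "tickets": "Տոմսերի համար",
    "venue": "Ակումբ",
}


def normalize(text: str) -> str:
    """NFC-normalize, casefold (Armenian has upper/lower case), collapse whitespace."""
    text = unicodedata.normalize("NFC", text).casefold()
    return " ".join(text.split())


def score_run(output: str, expected: dict[str, str] = EXPECTED) -> dict[str, bool]:
    """Assumption: a token passes if its normalized form occurs in the normalized output."""
    haystack = normalize(output)
    return {key: normalize(token) in haystack for key, token in expected.items()}


def summarize(outputs: list[str], expected: dict[str, str] = EXPECTED) -> dict[str, float]:
    """Per-token pass rates across runs, plus the share of runs with every token correct."""
    runs = [score_run(o, expected) for o in outputs]
    n = len(runs)
    rates = {key: sum(r[key] for r in runs) / n for key in expected}
    rates["all"] = sum(all(r.values()) for r in runs) / n
    return rates
```

Because of the normalization, `ԱՊՐԻԼ` in the model output matches the expected `Ապրիլ`, and `ՀԱՅԿ   ՊԵՏՐՈՍՅԱՆ` with extra spaces matches the performer name.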

The surprising finding: temperature fixes everything

First observation: the same poster image comes back garbled differently across runs (ԱՂՐԷԱ, ԱՃՐԷԱ, ԱՄՐԲԴ instead of ԱՊՐԻԼ). The failures vary, but they aren't random. They're confusions between visually similar glyphs (Պ↔Ղ, Ի↔Է, Ս↔Մ, Տ↔Ճ), the same mistakes a person would make on stylized fonts.
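To see exactly which glyphs were swapped, a positional diff is enough when the garble has the same length as the expected word. All three ԱՊՐԻԼ examples meet that condition. This is a sketch with a hypothetical helper name. It deliberately does not handle inserted or dropped characters, which would need a real alignment such as edit distance.

```python
def glyph_substitutions(expected: str, observed: str) -> list[tuple[str, str]]:
    """Return (expected, observed) character pairs at positions where the strings differ.

    Positional comparison only: assumes a one-for-one glyph swap with no
    insertions or deletions.
    """
    if len(expected) != len(observed):
        raise ValueError("positional diff needs equal-length strings")
    return [(e, o) for e, o in zip(expected, observed) if e != o]


if __name__ == "__main__":
    for garbled in ["ԱՂՐԷԱ", "ԱՃՐԷԱ", "ԱՄՐԲԴ"]:
        print(garbled, glyph_substitutions("ԱՊՐԻԼ", garbled))
```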

The tell: every failure is different, but every success is identical and perfect. That's not what "the model can't read Armenian" looks like, which would be the same wrong answer every time. It looked like sampling noise.
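That "tell" can be turned into a rough automated check. The sketch counts distinct strings among passing and failing runs. The decision rule is my own heuristic, not a formal statistical test. It says that one distinct success plus several distinct failures looks like sampling noise, while a single repeated wrong answer looks like a consistent misreading. Both function names are hypothetical.

```python
def noise_signature(outputs: list[str], passed: list[bool]) -> dict[str, int]:
    """Count runs and distinct output strings, split by pass/fail."""
    if len(outputs) != len(passed):
        raise ValueError("outputs and passed must be the same length")
    ok = [o for o, p in zip(outputs, passed) if p]
    bad = [o for o, p in zip(outputs, passed) if not p]
    return {
        "passing_runs": len(ok),
        "distinct_passing": len(set(ok)),
        "failing_runs": len(bad),
        "distinct_failing": len(set(bad)),
    }


def looks_like_sampling_noise(sig: dict[str, int]) -> bool:
    """Heuristic: successes collapse to one answer, failures scatter across several."""
    return sig["distinct_passing"] == 1 and sig["distinct_failing"] > 1
```

Feeding it the date outputs from the runs above (ԱՊՐԻԼ on passing runs, ԱՂՐԷԱ / ԱՃՐԷԱ / ԱՄՐԲԴ on failing ones) yields one distinct success and three distinct failures.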

Which sent me back to a setting I'd honestly forgotten existed: temperature. gemini-3-flash-preview's default is 1.0 — high enough that on tokens where the model is uncertain (small stylized Armenian glyphs, in this case), it rolls the dice between visually-similar candidates instead of committing to its most likely read. Setting temperature: 0 collapses the decoder to its greedy answer.
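The mechanism can be shown with a toy decoder for a single glyph position. Logits are divided by the temperature and passed through a softmax, and one glyph is drawn from the result. Temperature 0 is treated as greedy argmax. The logits below are invented for illustration, and real decoders work on subword tokens rather than single Armenian letters. The sketch only shows why a lower temperature concentrates probability on the most likely read.

```python
import math
import random


def softmax_with_temperature(logits: dict[str, float], temperature: float) -> dict[str, float]:
    """Convert logits to sampling probabilities; temperature 0 means greedy argmax."""
    if temperature < 0:
        raise ValueError("temperature must be >= 0")
    if temperature == 0:
        best = max(logits, key=logits.get)
        return {tok: (1.0 if tok == best else 0.0) for tok in logits}
    scaled = {tok: v / temperature for tok, v in logits.items()}
    peak = max(scaled.values())  # subtract the max before exp() for numerical stability
    exps = {tok: math.exp(v - peak) for tok, v in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def decode_glyph(logits: dict[str, float], temperature: float, rng: random.Random) -> str:
    """Pick one glyph: always the argmax at temperature 0, a weighted draw otherwise."""
    probs = softmax_with_temperature(logits, temperature)
    glyphs = list(probs)
    return rng.choices(glyphs, weights=[probs[g] for g in glyphs], k=1)[0]


# Made-up logits for one hard-to-read position where the true glyph is Պ.
HYPOTHETICAL_LOGITS = {"Պ": 3.0, "Ղ": 1.5, "Ճ": 1.0, "Մ": 0.5}

if __name__ == "__main__":
    rng = random.Random(0)
    for t in (0, 1.0):
        reads = [decode_glyph(HYPOTHETICAL_LOGITS, t, rng) for _ in range(1000)]
        print(f"temperature={t}: Պ chosen in {reads.count('Պ') / 10:.1f}% of draws")
```

Suppose, as a simplifying assumption, that each of n uncertain glyphs is read correctly with independent probability p. A whole transcription is then clean with probability p**n. That is how a modest per-glyph error rate becomes a noticeable per-image failure rate.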

50/50 perfect runs, 100% on all 6 tokens, no garble.

One line of config. Months of "LLMs are just flaky" chalked up to a default we never reviewed.
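The post doesn't show its client code. For reference, here is one way to express that line in a raw Gemini REST request, assuming the public `generateContent` endpoint and an API key in a `GEMINI_API_KEY` environment variable. `build_request` and `send` are hypothetical helpers. Only `temperature` is set here. The LOW thinking level from the winning table row is omitted, since the post doesn't show how it was configured, so check the current Gemini API docs for that field.

```python
import base64
import json
import os
import urllib.request

# Assumed endpoint pattern; the model name is the one used in the post.
ENDPOINT = (
    "https://generativelanguage.googleapis.com/v1beta/models/"
    "gemini-3-flash-preview:generateContent"
)


def build_request(image_bytes: bytes, prompt: str, mime_type: str = "image/jpeg") -> dict:
    """JSON body for generateContent: one inline image plus a text prompt."""
    return {
        "contents": [
            {
                "parts": [
                    {"inline_data": {
                        "mime_type": mime_type,
                        "data": base64.b64encode(image_bytes).decode("ascii"),
                    }},
                    {"text": prompt},
                ]
            }
        ],
        # The one line of config: greedy decoding instead of the 1.0 default.
        "generationConfig": {"temperature": 0},
    }


def send(body: dict) -> dict:
    """POST the body with the API key header and return the parsed JSON response."""
    req = urllib.request.Request(
        ENDPOINT,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "x-goog-api-key": os.environ["GEMINI_API_KEY"],
        },
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)
```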

The other surprise: newer ≠ better

Everything I'd read online said gpt-5.4-mini should blow gpt-5-mini out of the water — it's the newer model, and the benchmarks back that up. On this task, nope:

- `gpt-5-mini` with `reasoning: minimal`: 3.4s latency, $0.81/1k calls, 90% critical pass
- `gpt-5.4-mini` with `reasoning: low`: 8.8s latency, 5% critical pass

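At low reasoning, gpt-5.4-mini "hedges": it ignores the transcription instruction 70% of the time and returns just a visual description. You have to crank it up to medium to force it to commit, which costs $17/1k (vs $5/1k for gpt-5-mini at medium). The older, cheaper model is the better buy here. At minimal reasoning, gpt-5-mini matches or beats gpt-5.4-mini at low on every token. At medium, gpt-5.4-mini only pulls ahead on the subtitle (70% vs 40%) and venue (50% vs 40%), at roughly 3.5× the cost.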
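One way to count that hedging automatically is to check how much of a reply is in Armenian script. A transcription of this poster should be mostly Armenian letters. "A man with a guitar" contains none. The helper names and the 0.2 threshold are my own hypothetical choices, not something the original pipeline is known to use.

```python
def armenian_ratio(text: str) -> float:
    """Fraction of alphabetic characters that fall in the Armenian block (U+0530 to U+058F)."""
    letters = [ch for ch in text if ch.isalpha()]
    if not letters:
        return 0.0
    armenian = sum(1 for ch in letters if "\u0530" <= ch <= "\u058f")
    return armenian / len(letters)


def looks_like_hedge(text: str, threshold: float = 0.2) -> bool:
    """Flag replies that are mostly non-Armenian, e.g. a pure visual description."""
    return armenian_ratio(text) < threshold
```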

Other notable findings

- Neither OpenAI mini can read stylized Armenian cursive: 0/40 runs combined got the Մի ձայն handwritten title. They nail block text but go blind on decorative fonts. `gemini-3-flash-preview` gets it 100% at temp=0.
- `claude-haiku-4-5` ignored the transcription task entirely and returned only visual descriptions ("a man with a guitar"), 0/10.
- `claude-sonnet-4-6` tries hard but has systematic glyph confusions that `gemini-3-flash-preview` doesn't share: ՀԱՅԿ→ՀԱԿՈԲ (Hayk → Hakob), Մ→Ս.
- `gemini-3-flash-preview` with `mediaResolution: high` (1120 tokens/image vs default) did not help; it slightly hurt accuracy at n=20.
- `gemini-3-flash-preview` with `thinking_level: HIGH` also did not help meaningfully (76% all-6 vs 78% at LOW) and costs 2.85× more.

Full results

| Model | Reasoning | Temp | n | Date (Ապրիլ) | Title (Մի ձայն) | Name (ՀԱՅԿ ՊԵՏՐՈՍՅԱՆ) | Subtitle (հեղինակային երգերի երեկո) | Tickets (Տոմսերի համար) | Venue (Ակումբ) | All 6 | Latency | Cost/1k calls |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| gemini-3-flash-preview | LOW | 0 | 50 | 100% | 100% | 100% | 100% | 100% | 100% | 100% | 6.0s | $1.05 |
| gemini-3-flash-preview | LOW | 1 | 50 | 80% | 94% | 80% | 88% | 80% | 96% | 78% | 6.2s | $1.23 |
| gemini-3-flash-preview | HIGH | 1 | 50 | 90% | 90% | 90% | 76% | 90% | 90% | 76% | 9.1s | $3.58 |
| gemini-3-flash-preview | LOW · hi-res | 1 | 20 | 70% | 90% | 70% | 80% | 70% | 95% | 70% | 6.5s | $1.33 |
| gpt-5-mini | medium | n/a | 10 | 100% | 0% | 100% | 40% | 100% | 40% | 0% | 27.6s | $4.96 |
| gpt-5-mini | minimal | n/a | 20 | 90% | 0% | 100% | 10% | 85% | 15% | 0% | 3.4s | $0.81 |
| gpt-5.4-mini | medium | n/a | 10 | 100% | 0% | 100% | 70% | 100% | 50% | 0% | 28.6s | $17.21 |
| gpt-5.4-mini | low | n/a | 20 | 15% | 0% | 10% | 0% | 15% | 0% | 0% | 8.8s | $4.44 |
| gpt-5.4-mini | none | n/a | 10 | 0% | 0% | 0% | 0% | 0% | 0% | 0% | 2.3s | $1.74 |
| claude-haiku-4-5 | n/a | n/a | 10 | 0% | 0% | 0% | 0% | 0% | 0% | 0% | 1.4s | $2.84 |
| claude-sonnet-4-6 | n/a | n/a | 10 | 100% | 0% | 0% | 90% | 80% | 50% | 0% | 8.9s | $11.07 |